I just tested TwainGPT Humanizer to rewrite some AI-generated content, but I’m not sure if my review is fair or if I’m missing key pros and cons. Can you look over my impressions, suggest what else I should evaluate, and tell me if this tool is actually worth using long term for humanizing AI text?

TwainGPT Humanizer Review

I spent an afternoon messing with TwainGPT because I wanted to see if it could slip past the usual AI detectors without turning my writing into soup.

Short version: it behaved like two different tools, depending on which detector looked at it.

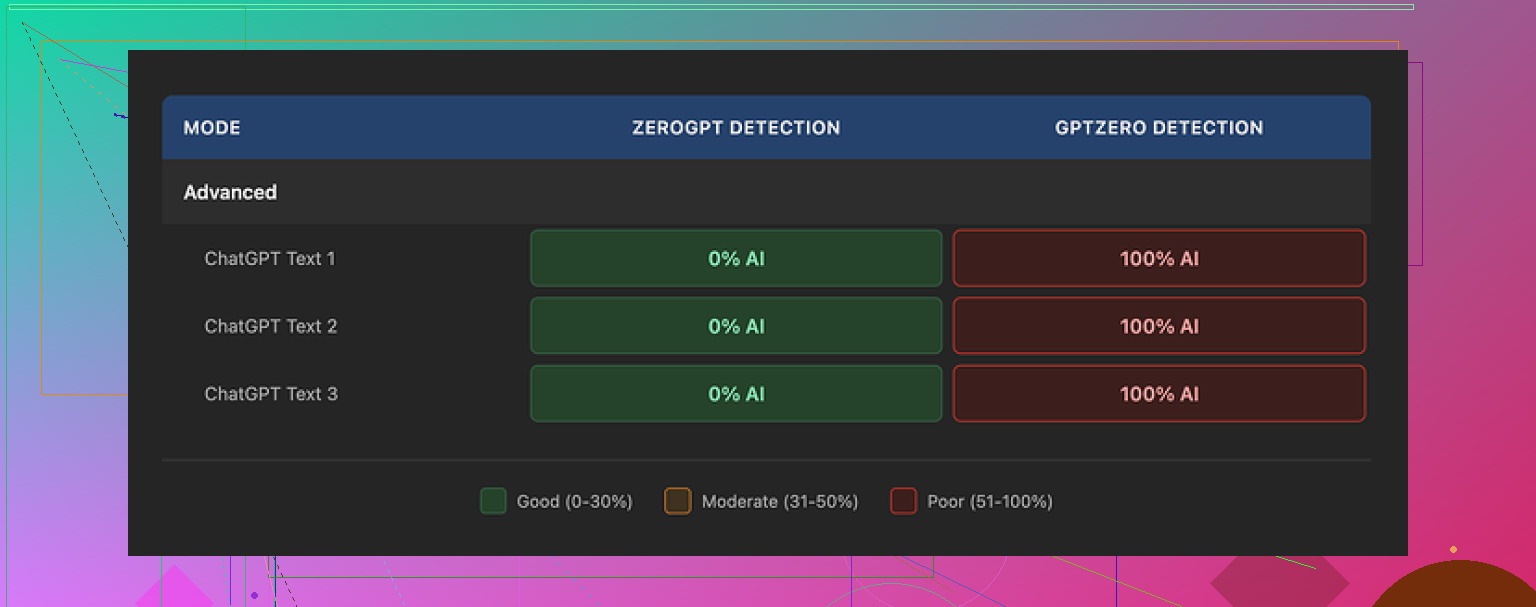

I took three test pieces and ran them through TwainGPT, then checked the outputs on multiple detectors.

ZeroGPT results:

All three samples showed 0% AI. Clean passes.

GPTZero results:

Same three samples. GPTZero flagged every one as 100% AI.

So if someone only cares about ZeroGPT, TwainGPT looks perfect. The moment GPTZero enters the picture, it turns risky. You do not know which detector a client, teacher, or platform will use, so this mismatch is a problem.

Here is one of the detection screenshots from the test:

About the writing itself

From what I saw, TwainGPT leans hard on one trick. It chops long sentences into short, flat ones. That helps with some detectors, but it hurts readability.

The output often felt like this:

• short sentence

• slightly odd phrasing

• another short sentence

• then a long, tangled one that looks off

I kept seeing:

• run-on sentences glued together in strange ways

• weird word order

• phrases that sounded like they came from someone who half-remembered English class

After a few paragraphs, it felt more like bullet points pasted into a PowerPoint slide than something a person typed in one sitting.

If I had to score the writing alone, I would put it around 6/10. Usable with editing, but I would not trust it unedited for anything important.

Pricing and limits

Their paid plans when I checked:

• Entry plan: 8 dollars per month (if billed annually) for 8,000 words

• Top plan: 40 dollars per month for unlimited words

Two things to keep in mind:

• They have a strict no-refund policy, even if you never touch your credits

• There is a 250-word free limit for testing the tool

So if you want to try it, squeeze everything you can out of that 250-word test before paying. Run the results through multiple detectors, not just one.

Comparison with Clever AI Humanizer

I ran the same kind of side-by-side tests with another tool, Clever AI Humanizer, using similar prompts and detectors.

In those tests, Clever AI Humanizer handled detection better overall and the writing felt less robotic. It also does not charge anything right now, so you can hammer it with as many tests as you want before deciding if it fits your use case.

If your goal is:

• pass more than one detector

• spend less time fixing awkward sentences

then starting with Clever AI Humanizer and checking outputs across tools like ZeroGPT and GPTZero might save you time and money.

TwainGPT has its place if you only care about a specific detector and do not mind cleaning up the text. For mixed-detector situations, I would be cautious.

Your review of TwainGPT Humanizer is solid so far. It hits real use cases and you backed it with detector results, which a lot of people skip. You are not far off from what @mikeappsreviewer saw, but there are a few extra angles you might want to add so it feels more complete and balanced.

Here are concrete areas you might expand:

Clarify your test setup

Right now you talk about your impressions, but readers will trust you more if they see how you tested. Add things like:

- How many samples you used.

- Length of each sample.

- What kind of text it was (blog post, essay, technical writing, sales copy).

- Which detectors you used besides ZeroGPT and GPTZero, if any.

- Whether you pasted TwainGPT output back into ChatGPT or other LLMs to see if they detect “AI-ness”.

This makes your review feel more like a small benchmark instead of a casual tryout.

Add a short “who is this for” section

Right now the takeaway is kind of binary. Passes here, fails there. Help people match the tool to their situation. For example:

- Might be OK for users who only deal with one known detector and do not mind editing.

- Risky for students or freelancers who do not know which detector a teacher or client uses.

- Not ideal for long-form content where readability matters a lot.

Evaluate readability with more structure

You mention the short choppy sentences and weird phrasing. You can tighten this up with quick checks:

- Run the original and the TwainGPT version through a readability tool like Hemingway or Grammarly. Compare grade levels and “hard to read” flags.

- Count how many sentences become fragments or run-ons.

- Quote one short before/after pair so readers see the difference.
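If you want the fragment/run-on count to be more than an eyeball estimate, a rough Python sketch like this can profile sentence lengths for the original and the rewritten version. The 6-word and 35-word cutoffs are arbitrary assumptions, and the regex split is naive (it will trip over abbreviations like "e.g."), so treat the numbers as a sanity check next to Hemingway or Grammarly, not a replacement for them.

```python
import re

# Arbitrary cutoffs (assumptions): adjust to your own idea of "fragment" and "run-on".
SHORT_MAX = 6   # sentences with this many words or fewer are possible fragments
LONG_MIN = 35   # sentences with this many words or more are possible run-ons


def sentence_stats(text: str) -> dict:
    """Rough sentence-length profile; splits after ., ! or ? followed by whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(re.findall(r"[A-Za-z0-9']+", s)) for s in sentences]
    return {
        "sentences": len(lengths),
        "avg_words": round(sum(lengths) / len(lengths), 1) if lengths else 0.0,
        "short": sum(1 for n in lengths if n <= SHORT_MAX),
        "long": sum(1 for n in lengths if n >= LONG_MIN),
    }


# Invented example text; paste your own original and TwainGPT output instead.
original = ("The tool rewrites text quickly, although the results vary a lot "
            "depending on the topic and the length of the input.")
rewritten = ("The tool rewrites text. It is quick. Results vary. "
             "Topic matters. Length matters too.")

for label, text in (("original", original), ("rewritten", rewritten)):
    print(label, sentence_stats(text))
```

For the invented pair above, the original comes out as one 21-word sentence and the rewrite as five sentences averaging 2.8 words, all of them flagged as short. That kind of shift is the "bullet points pasted into a slide" effect described in the review.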

I disagree slightly with scoring it as “6/10 usable with editing” without examples. Some users will think that sounds fine until they see how much cleanup it needs. One small before vs after block helps a lot.

Talk about consistency

A lot of “humanizers” behave differently from run to run. It helps to check:

- If you run the same paragraph 3 times, do you get similar quality or a random mess?

- Does it handle different topics equally well (tech vs. casual vs. academic)?

- Does it break when you feed it longer texts, like 1,000+ words?

If that matches what you see, you can add a line like, “On longer inputs I saw more grammar issues and more repeated phrases.”
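For the "same paragraph 3 times" check, one quick way to put a number on run-to-run drift is Python's built-in difflib. This sketch compares outputs word by word; the 0 to 1 ratio only measures surface overlap, not quality, and the sample runs are made up for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations


def pairwise_similarity(outputs: list[str]) -> dict[tuple[int, int], float]:
    """Word-level similarity ratio (0 = nothing shared, 1 = identical) for each pair of runs."""
    scores = {}
    for (i, a), (j, b) in combinations(enumerate(outputs, start=1), 2):
        scores[(i, j)] = round(SequenceMatcher(None, a.split(), b.split()).ratio(), 2)
    return scores


# Made-up outputs from running the same paragraph three times.
runs = [
    "The tool is fast. It works well. Results can vary.",
    "This tool is quick. It mostly works. Results change a lot.",
    "The tool is fast. It works well. Results can vary.",
]

for pair, score in pairwise_similarity(runs).items():
    print(f"run {pair[0]} vs run {pair[1]}: {score}")
```

Low overlap between runs is not automatically bad, but if one run reads fine and the next is a mess, that inconsistency is worth a line in the review.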

Look at style preservation

Many people want to keep their own tone. You can test:

- Does TwainGPT keep humor, rhetorical questions, and personal voice?

- Does it flatten everything into the same “neutral student essay” style?

- Does it overuse certain safe phrases?

If it erases style, mention that directly. That is a big con for people who care about brand voice.
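To check "overuses certain safe phrases" without rereading every output, you could count repeated three-word phrases. This is a rough sketch: the phrase length and the repeat threshold are arbitrary choices, and the sample text is invented.

```python
import re
from collections import Counter


def repeated_phrases(text: str, n: int = 3, min_count: int = 2) -> list[tuple[str, int]]:
    """Return n-word phrases that appear at least min_count times, most frequent first."""
    words = re.findall(r"[a-z']+", text.lower())
    grams = Counter(tuple(words[i:i + n]) for i in range(len(words) - n + 1))
    return [(" ".join(g), c) for g, c in grams.most_common() if c >= min_count]


sample = "It is important to note the cost. It is important to note the risk."
for phrase, count in repeated_phrases(sample):
    print(count, phrase)
```

Run it on several outputs pasted together: if the same stock phrases keep surfacing across unrelated topics, that is decent evidence the tool is pushing everything toward one house voice.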

Mention speed, UX, and workflow

Simple but useful details:

- Response speed for short vs long texts.

- Any bugs, timeouts, or throttling.

- Does the UI let you paste large chunks, or do you need to split the text manually?

- Any browser issues you hit.

These things affect daily use more than people think.

Pricing fairness and risk

You already mention price and the no-refund policy. Add some context:

- Effective cost per 1,000 words on each plan.

- Compare that to what you would pay a human editor on Fiverr or Upwork for a quick polish.

- Whether the no-refund policy fits a tool that gives unpredictable results across detectors.
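To put numbers on the first point, here is a quick Python calculation using the plan prices quoted earlier in the thread. It assumes the entry plan's 8,000 words are a monthly allowance at the annual-billing rate of $8/month, which is worth double-checking on their pricing page; the usage levels are just examples.

```python
# Effective cost per 1,000 words, using the plan prices quoted in the review.
# Assumptions: the entry plan's 8,000 words are a monthly allowance, and you
# pay the annual-billing rate of $8/month. Usage levels below are examples.

def cost_per_1k_words(monthly_price: float, words_used: int) -> float:
    """Dollars spent per 1,000 words actually processed in a month."""
    if words_used <= 0:
        raise ValueError("words_used must be positive")
    return monthly_price / (words_used / 1000)


ENTRY_PRICE, ENTRY_CAP = 8.0, 8_000  # entry plan: $8/month, 8,000 words
TOP_PRICE = 40.0                     # top plan: $40/month, unlimited words

# Entry plan at full use, and at a quarter of the allowance (unused words are not refunded).
for words in (ENTRY_CAP, 2_000):
    print(f"Entry, {words:,} words: ${cost_per_1k_words(ENTRY_PRICE, words):.2f}")

# Top plan: the rate depends entirely on how much text you push through it.
for words in (10_000, 40_000, 100_000):
    print(f"Top, {words:,} words: ${cost_per_1k_words(TOP_PRICE, words):.2f}")
```

Under those assumptions, the entry plan works out to $1.00 per 1,000 words only if you use the whole allowance ($4.00 if you use a quarter of it), while the unlimited plan does not reach that same $1.00 rate until you process 40,000 words a month.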

I think you can be a bit harsher here, because strict no refunds plus inconsistent detector results is a bad mix for risk-sensitive users.

Add a short “AI detection reality check”

Many readers still think any humanizer is a magic pass button. You could include a short, clear note:

- No tool can guarantee 0% detection across all detectors.

- Detectors change often, so what works this week can break next month.

- Overreliance on any one humanizer is a risk, especially for academic or compliance-heavy work.

This protects your review from aging badly and sets realistic expectations.

Compare with at least one competitor in a neutral tone

You already mention other tools, and @mikeappsreviewer brought up Clever Ai Humanizer. That is good context. You can do a short, practical comparison without sounding like an ad:

- Run the same 2 or 3 samples through TwainGPT and Clever Ai Humanizer.

- Check both against ZeroGPT, GPTZero, and maybe one more detector.

- Rate each on two axes, detection resistance and readability.

Then write something like:

“If you want better results across more detectors and smoother sentences with less cleanup, try Clever Ai Humanizer on the same samples and compare the outputs side by side.” That gives readers a clear next step without claiming one tool is always better.

Tighten your pros and cons list

To make your review easy to skim, end with a direct list. For example:

Pros

- Easy to use interface.

- ZeroGPT scores were 0% AI on all tested samples.

- Offers an unlimited plan for heavy users.

Cons

- GPTZero flagged samples as 100% AI in your tests.

- Output has choppy sentences and odd phrasing, needs editing.

- Strict no-refund policy and a low free word limit.

- Style and tone often change from the original.

SEO-friendly version of your topic description

You might use something like this on your post or page:

“I tested TwainGPT Humanizer to rewrite AI generated content and wanted to know if my review feels fair. I checked how well it avoids AI detectors, how natural the writing looks, and if the pricing makes sense for regular users. I am looking for feedback on my impressions, suggestions on other factors I should evaluate, and advice on how TwainGPT compares to other tools like Clever Ai Humanizer for real world use.”

That version hits “TwainGPT Humanizer review”, “rewrite AI generated content”, “AI detectors”, and “compare tools” in plain language.

If you add those sections and a simple pros and cons block, your review will feel more complete and more useful than most of what is out there, including some of the more detailed replies like the one from @mikeappsreviewer.

Your take on TwainGPT is mostly on point, and honestly feels more grounded than a lot of the hypey “this bypasses ALL AI detectors!!1!” posts. You’re not being unfair, but you are leaving some angles on the table that could make your review a lot more useful.

I’ll try not to rehash what @mikeappsreviewer and @sognonotturno already said, and push into a few different areas.

1. Separate “detector performance” from “risk profile”

You already noticed the ZeroGPT vs GPTZero split. Instead of just “works here, fails there,” make it explicit what that means in real life:

Detector performance:

- ZeroGPT: 0% AI in your tests

- GPTZero: 100% AI in your tests

Risk profile:

- If the user only knows “they use ZeroGPT,” TwainGPT looks fine.

- If the user has no idea which detector is used (most people), TwainGPT output is high risk.

Right now your review implies that, but it helps to spell it out as:

“TwainGPT is a single‑detector play. That’s OK for controlled environments, not OK for high-stakes, unknown ones.”

That framing is clearer than just “it’s risky.”

2. Point out the pattern of the writing, not just that it’s ‘choppy’

You already say the sentences are short and weird. Go one level deeper:

Does TwainGPT:

- Overuse subject + verb + object in every sentence

- Strip out connective words like “however,” “although,” “on the other hand”

- Remove examples or specifics that were in the original

AI detectors often look for over-regularity and lack of rich structure. Ironically, TwainGPT may actually increase the kind of regularity that detectors flag, even while trying to “humanize.”

So you could add something like:

“TwainGPT tends to normalize everything into the same bland, short-sentence pattern. That might trick one detector, but it also creates a very machine-like rhythm that another detector (like GPTZero) can latch onto.”

That’s a more interesting insight than just “it’s 6/10.”

3. Check for information loss and subtle meaning changes

One thing neither of the other replies hit hard:

Does TwainGPT preserve the meaning?

A lot of humanizers keep the vibe but quietly:

- Drop hedging (“probably,” “might,” “roughly”)

- Remove nuance or conditional statements

- Flip formality level (turn casual into stiff, or vice versa)

You can test that by:

- Looking for missing qualifiers

- Checking if technical terms get simplified into something wrong

- Seeing if any opinionated phrasing gets sanded down

If TwainGPT changes what you’re saying, not just how, that is a much bigger con than a few awkward sentences.
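The "missing qualifiers" check is easy to partly automate. This rough sketch counts a hand-picked list of hedge words in the original and the rewrite and reports any that dropped. The word list is an assumption you should adapt, it will not catch hedges that were reworded rather than deleted, and words like "may" can also match unrelated uses.

```python
import re
from collections import Counter

# Hand-picked, illustrative hedge words; extend with the qualifiers you actually use.
HEDGES = ("probably", "might", "roughly", "perhaps", "likely", "may", "could", "usually", "often")


def hedge_counts(text: str) -> Counter:
    """Count how often each hedge word appears (case-insensitive, whole words only)."""
    words = Counter(re.findall(r"[a-z']+", text.lower()))
    return Counter({h: words[h] for h in HEDGES if words[h]})


def dropped_hedges(original: str, rewritten: str) -> dict[str, int]:
    """Hedge words that occur fewer times in the rewrite than in the original."""
    before, after = hedge_counts(original), hedge_counts(rewritten)
    return dict(before - after)  # Counter subtraction keeps only positive differences


# Invented before/after pair for illustration.
original = "This will probably cut costs by roughly 20 percent, but it might not."
rewritten = "This will cut costs by 20 percent."
print(dropped_hedges(original, rewritten))
```

In the invented pair, all three qualifiers disappear, and the rewrite now states a firm 20 percent saving that the original never promised. That is the kind of meaning change worth quoting in the review.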

4. Address the “false sense of security” problem

I’d honestly be a bit harsher here than you were.

Tools like this can create:

- Overconfidence: “ZeroGPT said 0%, I’m safe.”

- Ignorance of variance: Detectors update models and thresholds silently. What passes today can fail next week.

- Misplaced blame: When people get flagged later, they assume the detector is “wrong,” even if the text had obvious markers.

You can call that out directly:

“The biggest issue is not that TwainGPT sometimes fails, but that it can look perfectly safe if you only check one detector. That false confidence is more dangerous than openly imperfect tools.”

That’s a strong practical warning that goes beyond just pros/cons.

5. Fairness: mention one actual strength besides ZeroGPT

To avoid sounding like you are just dunking on it:

Did TwainGPT ever:

- Improve clarity on any paragraph

- Fix minor grammar

- Reduce obvious “AI-style” filler phrases like “in conclusion,” “in today’s world,” etc.

Even if it’s small, naming one real positive helps your criticism feel measured. Something like:

“On the plus side, it did sometimes strip out fluff and make a few points clearer. The problem is that you pay for that clarity with stiffness and risk across other detectors.”

So it’s not “this tool sucks,” it is “it’s narrowly useful under specific constraints.”

6. Slight disagreement: I would not rate it “6/10 usable with editing”

I think you were a little generous there.

If:

- GPTZero nails it as 100% AI

- The text reads stiff and occasionally odd

- You wouldn’t trust it for real submissions

Then “6/10 usable with editing” might give some readers the wrong idea, like, “ok cool, that’s fine for my assignment.”

You might reframe to something like:

“For casual stuff, it’s workable if you are ready to heavily edit. For anything that matters, you’d basically have to treat it as a rough draft and rewrite it again yourself.”

That sets expectations lower without sounding like a rant.

7. Compare your impressions with other tools in a more “workflow” way

You already mentioned comparison and others brought up Clever Ai Humanizer. What would help is talking about workflow, not just “X scored better.”

For example, you could describe:

Typical workflow with TwainGPT:

- Generate AI text

- Run through TwainGPT

- Run through detectors

- Then fix sentence flow manually

Alternative workflow:

- Use a humanizer that produces more natural output (for example, Clever Ai Humanizer)

- Run results through multiple detectors

- Make lighter edits

If your real experience was that Clever Ai Humanizer needed less cleanup and did not hard fail on GPTZero as often, that’s worth one or two sentences, not a big ad. Someone reading your review wants “what should I actually use instead, in practice?”

Just don’t frame it like “Clever is perfect,” more like:

“In my tests, Clever Ai Humanizer gave me more natural sentences and did better across more than one detector, so I spent less time manually rewriting.”

That is specific and believable.

8. One thing you could add that no one mentioned: the ethical and long-term angle

Not to moralize, but this is the elephant in the room:

- For students: they are basically trying to launder AI text around class policies.

- For freelancers: clients might be explicitly paying them not to do that.

- For platforms: TOS can get ugly if they find systematic bypassing of detection.

You do not have to preach, but a short paragraph like:

“If you are in a setting where AI use is restricted, tools like TwainGPT don’t solve the underlying risk. You are still dependent on a cat and mouse game between humanizers and detectors, which you will eventually lose if the rules tighten.”

That makes your review feel more honest and future-proof.

9. Quick, cleaner, search‑friendly description of what you did

You asked about whether your overall framing is fair. You can sharpen your “what this post is about” part into something like:

I spent time testing TwainGPT Humanizer on several AI‑generated samples to see how well it can hide AI content from popular detectors like ZeroGPT and GPTZero. I looked at detection scores, writing quality, readability, pricing, and how much manual editing the tool still needs. I am also comparing it to other AI humanizers such as Clever Ai Humanizer to figure out when, if ever, TwainGPT is actually worth using.

That is clearer, hits relevant search terms, and matches what you actually did.

Overall, your core take is valid: TwainGPT is OK in a narrow, known-detector scenario and kind of sketchy everywhere else. If you layer in:

- Meaning preservation

- Risk framing

- One or two concrete strengths

- A more realistic numeric rating

- A brief comparison in terms of workflow, not hype

then your review will stand on its own, even next to people like @mikeappsreviewer and @sognonotturno who already went pretty deep.

You’re not being unfair to TwainGPT at all. If anything, your review is more honest than a lot of the “bypass everything” noise out there. What you are missing is mostly framing, not substance.

Couple of points that build on what @sognonotturno, @chasseurdetoiles and @mikeappsreviewer already gave you, without rehashing their whole playbook:

1. Your biggest strength: you actually tested across tools

Don’t undersell this. Most “reviews” are just vibes. You:

- Used multiple AI detectors

- Compared TwainGPT against another humanizer

- Talked about pricing and limits

That already puts you in the top tier. I would explicitly call out that your tests are cross-detector, because that’s the real story here: TwainGPT looks amazing under ZeroGPT and collapses under GPTZero.

You can tighten this into a clear line in your post:

“In cross-detector tests, TwainGPT scored 0 percent AI on ZeroGPT but was flagged 100 percent AI on GPTZero using the same samples.”

That single sentence tells readers more than a dozen screenshots.

2. You should lean harder into “context of use”

Right now your review reads like: “Here is what it did” rather than “Here is when it actually makes sense.”

Split it out by scenario:

Low stakes / casual use

Social posts, blog drafts, internal docs. Here you can say: TwainGPT can be fine if you are just trying to rough up obviously AI text and you are willing to fix flow and tone yourself.

Medium stakes

Client blog posts, ghostwriting, content marketing. Your current findings already suggest it is risky if you do not know which detector the client or platform leans on. I would say this explicitly, not just hint at it.

High stakes

Academic work, job applications, grant proposals, compliance-heavy niches. Your own data basically screams: “Do not rely on this here.” Spell that out.

That “who should actually touch this” structure is something @mikeappsreviewer danced around but you can make more blunt.

3. You are slightly soft on the quality issues

You called the output 6/10 and “usable with editing.” I disagree a bit there. Look at what you wrote:

- Choppy sentence structure

- Strange run-ons

- Odd phrasing and almost ESL vibes

- Repeated short sentences that feel like bullet fragments

If you would not send that unedited to a client or submit it in a class, then “usable with editing” is underselling the pain. I would rephrase to something like:

“Treat TwainGPT output as a rough, slightly scrambled draft. It is not something you can safely copy-paste into a serious assignment or client project without a full rewrite.”

That matches your own description more than the 6/10 label does.

You can also add a micro before/after pair consisting of 2 or 3 sentences. Not a big block, just enough to make the choppiness obvious without bloating the post.

4. One angle you have not touched: how much it changes voice

Everyone else focused on detectors and sentence quality. You could add something they did not: tone / style drift.

Two quick checks that do not require new tools:

- Does TwainGPT flatten humor, rhetorical questions or informal language into stiff neutral phrasing?

- Does every output start to sound like the same “student essay” voice, regardless of what you feed it?

If the answer is yes, that is a major con for:

- Brands that care about voice

- Freelancers who sell themselves on “I sound like you”

- People writing personal statements

You can drop in a line like:

“In my tests, TwainGPT often erased the original tone and turned everything into the same flat, safe style. That might help a detector score, but it is terrible if you care about having a recognizable voice.”

That’s a real user pain that very few reviews call out clearly.

5. Clever Ai Humanizer: do not just say “better,” say how it differs

You already compared TwainGPT with Clever Ai Humanizer. To keep things balanced and useful, I’d outline pros and cons for Clever too, instead of letting it float as “the answer.”

Based on what you hinted at and what people generally see with that kind of tool, you can outline something like:

Clever Ai Humanizer pros

- More natural sentence flow in your tests, less of that chopped up feel

- Handled multiple detectors more consistently on the same samples

- Currently lets you push a lot of text through so you can experiment freely

- Often needs less manual rewriting to sound like something a human might plausibly type

Clever Ai Humanizer cons

- Still not a guaranteed bypass for every detector or future update

- Can occasionally smooth things so much that specific technical wording gets dulled

- If you rely on it heavily, your writing might drift toward its default “house style” instead of your own

- Long-term pricing or limits could change, so building an entire workflow around it is its own kind of risk

That kind of breakdown keeps your comparison grounded and SEO-friendly without turning your post into an ad. It also shows you are willing to critique the “alternative,” not just TwainGPT.

6. Where you differ usefully from @sognonotturno / @chasseurdetoiles / @mikeappsreviewer

They already hammered:

- Clarifying test setup

- Consistency checks

- Speed / UX

- Pros and cons lists

- Reality check on “no tool is magic”

If you just follow that list point by point, your review will start to sound like theirs. Instead, I would:

- Borrow only the test clarity part

- Add your own angles: tone preservation, rough draft vs finished text, and real world “who should use this at all”

- Make the risk profile section very blunt, which they were a bit softer about

So you are not competing with them on sheer detail, you are adding perspective.

7. One last suggestion: a short “reality statement” in plain language

Some people land on these reviews with zero context. To protect them from reading only the first paragraph and running, you can include something like:

“No humanizer, including TwainGPT or Clever Ai Humanizer, can promise that AI-generated content will always pass every detector. The tools and the detectors are both changing all the time. If your school, client or platform has strict rules against AI, using any humanizer is always going to be a gamble.”

Put that near the conclusion. It makes your review much harder to misread as “here is a trick to be safe forever.”

If you add:

- Explicit cross detector risk framing

- Voice / style preservation analysis

- A harsher but more honest stance on how “usable” the output is

- Balanced pros and cons for Clever Ai Humanizer instead of just “this worked better for me”

then your TwainGPT Humanizer review will feel complete and distinct, even sitting next to what @sognonotturno, @chasseurdetoiles and @mikeappsreviewer already wrote.