I’ve been relying on Walter Writes AI reviews to decide which tools to use, but I’m starting to notice inconsistencies and possible errors in the feedback it gives. Some reviews seem detailed and trustworthy, while others feel rushed or even misleading. Has anyone else run into accuracy or reliability issues with Walter Writes AI reviews, and how do you verify whether its assessments are actually correct before trusting them?

Walter Writes AI review from someone who spent too long testing it

Walter Writes AI looked interesting on paper, so I ran a few tests and logged the numbers. The short version is that it behaved like three different tools glued together.

I used the free tier, which only lets you use the “Simple” mode. Paid users get “Standard” and “Enhanced” bypass levels, so my results are probably the floor, not the ceiling.

What the detectors said

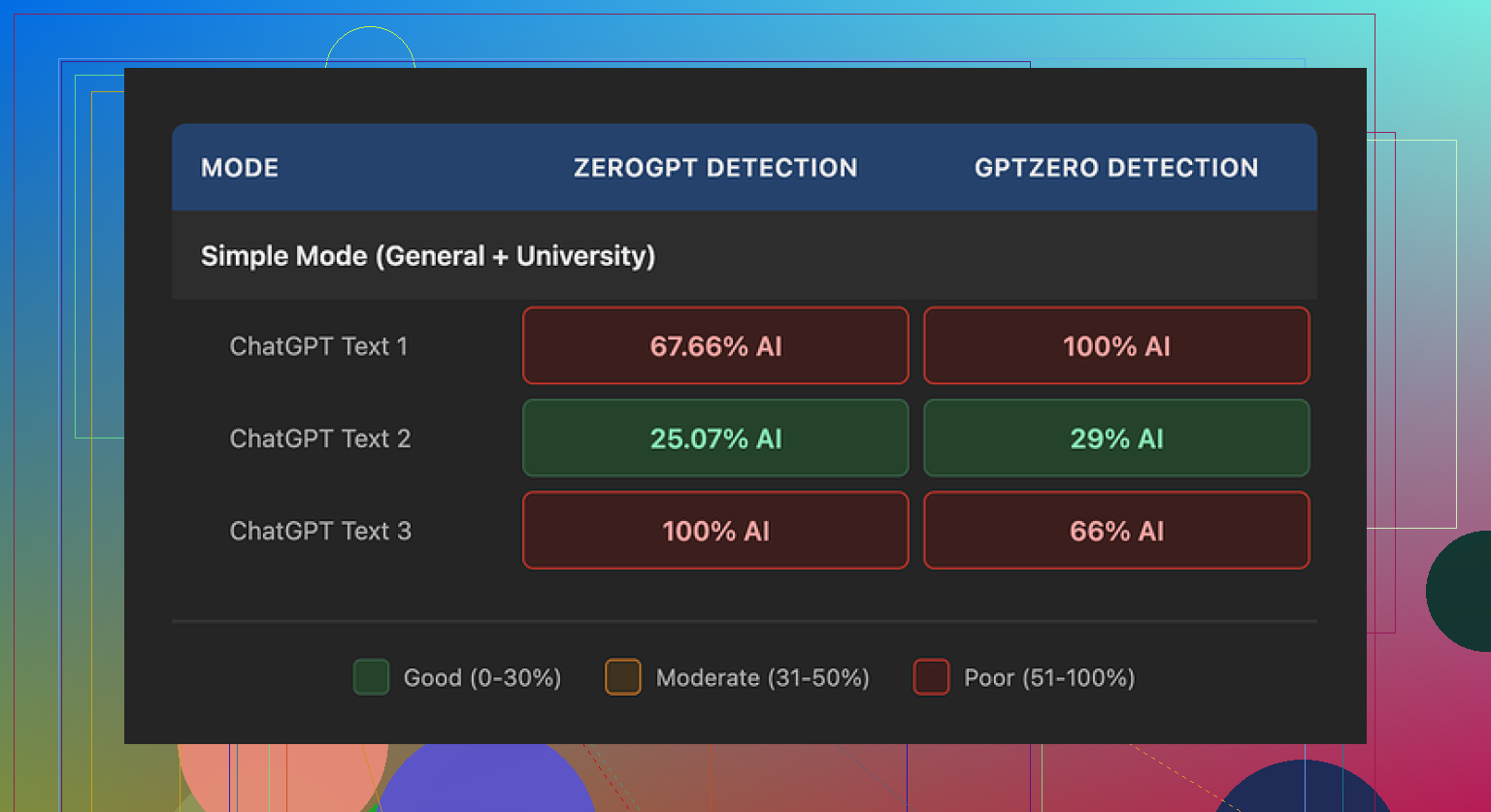

I pushed three separate samples through Walter Writes AI, then checked them with GPTZero and ZeroGPT.

Best run:

- GPTZero: 29%

- ZeroGPT: 25%

For a free humanizer, those numbers were decent. Most free ones I tried hovered much higher and triggered “likely AI” right away.

Then it went sideways.

The other two runs:

- Both had at least one detector flag them at 100% AI

- One looked worse after “humanizing” than the original raw AI text

So performance was unstable. One pass looked usable. The next screamed AI on every detector.


Writing quality and weird patterns

Detectors aside, the writing itself had some tells that would make an editor suspicious.

Stuff I kept seeing:

Semicolon spam

It liked to throw semicolons in places where a normal writer would use a comma or split into two sentences. It read like someone who learned punctuation from a rules list and decided to overuse it.

Word repetition

In one sample, it used the word “today” four times in three sentences. Same position, same tone. It felt robotic. Human writers repeat words, but not in that pattern.

Parenthetical clutter

There were lots of parentheses with examples like “(e.g., storms, droughts)” sprinkled through the text. Then the same type of parenthetical example showed up again and again. That pattern shows up a lot in unedited AI text.

None of this broke the text, but if you hand this to someone who reads AI content all day, they will raise an eyebrow.
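If you want to check your own drafts for these same tells before someone else does, a quick script can count them. This is a rough sketch, assuming plain English text; the function name `text_tells`, the naive sentence splitter, the stopword list, and the three-sentence window are all my own assumptions, not anything Walter Writes AI or any detector actually uses.

```python
import re
from collections import Counter

# Hypothetical stopword list so "the"/"it" don't win the repetition count.
STOPWORDS = {"a", "an", "and", "are", "as", "be", "for", "in", "is", "it",
             "of", "on", "or", "that", "the", "this", "to", "was", "with"}


def text_tells(text: str, window: int = 3) -> dict:
    """Rough counts of the tells above: semicolons, parentheticals, and the
    most repeated non-stopword within any `window` consecutive sentences."""
    # Naive sentence split: ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    worst_word, worst_count = None, 0
    # Slide a window of sentences; short texts are checked as one window.
    for start in range(max(1, len(sentences) - window + 1)):
        chunk = " ".join(sentences[start:start + window]).lower()
        words = [w for w in re.findall(r"[a-z']+", chunk) if w not in STOPWORDS]
        if words:
            word, count = Counter(words).most_common(1)[0]
            if count > worst_count:
                worst_word, worst_count = word, count
    return {
        "semicolons": text.count(";"),
        # Counts non-nested "( ... )" groups only.
        "parentheticals": len(re.findall(r"\([^)]*\)", text)),
        "most_repeated_word": worst_word,
        "repeat_count": worst_count,
    }
```

High numbers are only a prompt to reread the text, not proof of anything. Human writers use semicolons and parentheses too.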

Pricing and limits

Here is what I noted from their pricing:

- Starter: 8 dollars per month (billed yearly), 30,000 words

- Unlimited: 26 dollars per month, but each submission is capped at 2,000 words

- Free tier: 300 words total, not per month

So even on the “Unlimited” plan, you still have to chop long pieces into chunks. For people working on reports, books, or long articles, that gets annoying fast.
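Chopping by hand gets old. A minimal sketch, assuming plain text where paragraphs are separated by blank lines and that “words” means whitespace-separated tokens (the tool’s own word counter may disagree). The 2,000 limit is the per-submission cap noted above; the function name `chunk_words` is hypothetical.

```python
def chunk_words(text: str, limit: int = 2000) -> list[str]:
    """Pack whole paragraphs into chunks of at most `limit` words.
    A single paragraph longer than `limit` is split on word boundaries."""
    chunks: list[str] = []
    current: list[str] = []  # paragraphs in the chunk being built
    current_len = 0          # word count of `current`
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        words = para.split()
        # Oversized paragraph: flush what we have, then emit fixed-size slices.
        if len(words) > limit:
            if current:
                chunks.append("\n\n".join(current))
                current, current_len = [], 0
            for i in range(0, len(words), limit):
                chunks.append(" ".join(words[i:i + limit]))
            continue
        # Adding this paragraph would overflow the chunk: start a new one.
        if current_len + len(words) > limit:
            chunks.append("\n\n".join(current))
            current, current_len = [], 0
        current.append(para)
        current_len += len(words)
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Keeping paragraphs whole means each submission starts and ends at a natural break, but each chunk still gets humanized separately, so expect to reread the seams between them.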

Policy stuff that bothered me

Two things stood out when I read through their terms and language.

Refund and chargeback wording

Their refund section leaned hard into chargeback threats. It talked about legal action around disputes in a way that felt aggressive for a small SaaS tool. I screen through a lot of tools for work and this felt out of proportion.

Data retention

I did not find a clear, plain language statement about how long they store submitted text, or how it is used long term. If you deal with client content, contracts, research notes, or anything sensitive, this matters.

If you are running school work or public blog content, you might not care. If you handle client writing or confidential docs, you probably will.

What worked better for me

After testing a bunch of these, I kept coming back to Clever AI Humanizer. My results there were more stable and the output read closer to how I write on a tired day.

No signup fee, no credit card. You can try it here:

They also have some community and walkthrough stuff tied to it:

Humanize AI tutorial on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Clever AI Humanizer review thread on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

YouTube video review

If you are deciding between Walter Writes AI and Clever AI Humanizer based on my tests, I would start with Clever, run your usual content through both, and then compare:

- Detector scores

- How the text sounds when you read it out loud

- How much cleanup you have to do after

For my use, Walter Writes AI felt too inconsistent, and the policy vibe made me keep it at arm’s length.

You are not imagining it. Walter Writes AI reviews feel hit or miss for a reason.

What it seems to do is mix scraped info, surface feature checks, and some template opinions. That works okay for simple tools with clear features. It breaks once you get into nuance like:

• How stable a tool is over time

• How it behaves on edge cases

• Privacy and data handling

• Pricing traps and usage caps

So you get some reviews that look detailed and specific, then others that feel generic or even wrong. The system does not test tools the way a human power user does. It describes them.

I partly disagree with @mikeappsreviewer on one point. Detector scores and AI humanizing are useful if you write a lot of content, but for picking tools in general you also need things Walter is weak at, like long-term reliability, support quality, and real user friction.

If you want to keep using Walter, I would treat it like a first pass only:

- Use Walter to list features, plans, and basic pros and cons.

- Cross-check those against the tool’s own site and one independent review.

- Search “[tool name] Reddit” or “[tool name] issues” and read at least 5 human comments.

- Ignore any Walter opinion that is not backed by a concrete example or number.

For AI content tools and “humanizers”, I would not rely on Walter at all. You want:

• Detector scores from multiple detectors

• How natural the text sounds when you read it out loud

• How much manual cleanup you do after

That is where Clever AI Humanizer is worth a look. Not because it is magic, but because you can quickly run your own samples and compare your before and after results with what Walter or other review bots say. If Walter says a tool is great but your own test with Clever AI Humanizer plus a detector shows garbage output, trust your test.

Practical setup you can use:

• Step 1: Use Walter for a quick shortlist of tools.

• Step 2: For each shortlisted tool, run one real workflow of yours, end to end.

• Step 3: For writing tools, run the output through Clever AI Humanizer and two detectors, then check readability yourself.

• Step 4: Keep notes in a simple spreadsheet, not in your head.
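Step 4 is easy to summarize once the sheet is exported as CSV. A minimal sketch, assuming one row per detector check with hypothetical columns `tool`, `run`, `detector`, `ai_score` (the percent “AI” the detector reported). The function name `summarize` and the 30-point spread threshold for calling a tool unstable are arbitrary assumptions; pick your own.

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def summarize(csv_text: str, max_spread: float = 30.0) -> dict:
    """Summarize detector scores per tool from a CSV with (assumed) columns
    tool,run,detector,ai_score. A tool is flagged 'unstable' when its scores
    across all runs and detectors differ by more than `max_spread` points."""
    scores = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        scores[row["tool"]].append(float(row["ai_score"]))
    summary = {}
    for tool, vals in scores.items():
        spread = max(vals) - min(vals)
        summary[tool] = {
            "checks": len(vals),  # one entry per detector check
            "mean_ai_score": round(mean(vals), 1),
            "spread": spread,
            "unstable": spread > max_spread,
        }
    return summary
```

Read the exported file with `open(path).read()` and pass the text in. The spread is reported separately because an average hides exactly the kind of swing described earlier in the thread, where one run looks decent and the next gets flagged at 100%.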

If Walter’s review and your notes disagree, side with your data. Walter is useful as a noisy signal, not as a final decision maker.

You’re not imagining it: Walter’s reviews are a bit all over the place.

What @mikeappsreviewer and @viajantedoceu both hinted at, from different angles, is that Walter is basically a feature-parrot with opinions. It’s pretty good at:

- Listing features and pricing from a product page

- Spitting out pros/cons that sound legit at a glance

- Sounding confident even when it’s half-wrong

Where it falls apart is the stuff you’re starting to notice:

Nuance & edge cases

Walter struggles with “messy reality” things like:

- How often a tool glitches or rate-limits you

- Whether support actually replies before the heat death of the universe

- Sneaky pricing caps, “unlimited*” plans, weird refund rules

That’s exactly why its reviews sometimes feel super detailed, then suddenly generic or just wrong on very practical points.

Inconsistent depth

Some Walter reviews clearly pull from real documentation or multiple sources, so they feel rich and specific.

Others read like someone skimmed the home page for 9 seconds, then freestyled the rest. That’s where you get contradictions, missing key limitations, or praise for features that either don’t exist or are locked behind higher tiers.

AI reviewing AI tools is… shaky

I actually disagree slightly with how much focus people put on detector scores alone. Detectors are noisy and can be gamed.

But for AI content tools, Walter is especially weak because it:

- Does not actually run your text through tools like Clever AI Humanizer or multiple detectors

- Mostly “describes” capabilities instead of benchmarking them

So if you’re picking humanizers, paraphrasers, or “undetectable AI” tools, Walter’s reviews are closer to marketing blurbs than serious testing.

Subtle bias toward “shiny”

A pattern I’ve noticed: tools with slick sites, bold claims, and a buffet of buzzwords tend to get rosier Walter reviews, even when user feedback elsewhere is mixed.

Quiet, boring-looking tools with solid reliability often get underplayed.

Where I think Walter is still useful:

- Quick snapshot of what a tool claims to do

- Rough feature comparison

- Basic pricing overview (then double check specifics yourself)

Where I would almost ignore Walter completely:

- Anything involving sensitive data, weird ToS, or legal/chargeback minefields

- AI “humanizer” tools, plagiarism tools, detectors, security tools

- Decisions where downtime or bugs will actually hurt you

On that AI humanizer angle: if you’re using AI heavily, a tool like Clever AI Humanizer is way more testable than any Walter review. You can:

- Drop in your own content

- Run it through Clever

- Hit it with a couple detectors

- Read it out loud and see how much you still need to edit

That tells you more in 10 minutes than Walter’s “Top 10 humanizers in 2026” article ever will.

So, tl;dr for your original question:

- Walter is not completely garbage, but it is absolutely not authoritative.

- Treat it as a noisy, first-pass research assistant, not a deciding vote.

- For anything important, especially AI writing / humanizing, trust your own mini-tests with tools like Clever AI Humanizer plus real user comments on Reddit, forums, etc.

- If Walter’s review and your actual hands-on experience collide, your experience wins every time.

And yeah, spotting inconsistencies is a sign your BS-detector is working, not that you’re overthinking it.